In the ever-evolving digital environment, marketers, webmasters, and SEO managers need powerful tools to analyze websites and collect important data. One such tool is Netpeak Checker. This article covers what the tool is, what problems it solves, and which of its main features can help with efficient SEO analysis of your website.

What is Netpeak Checker?

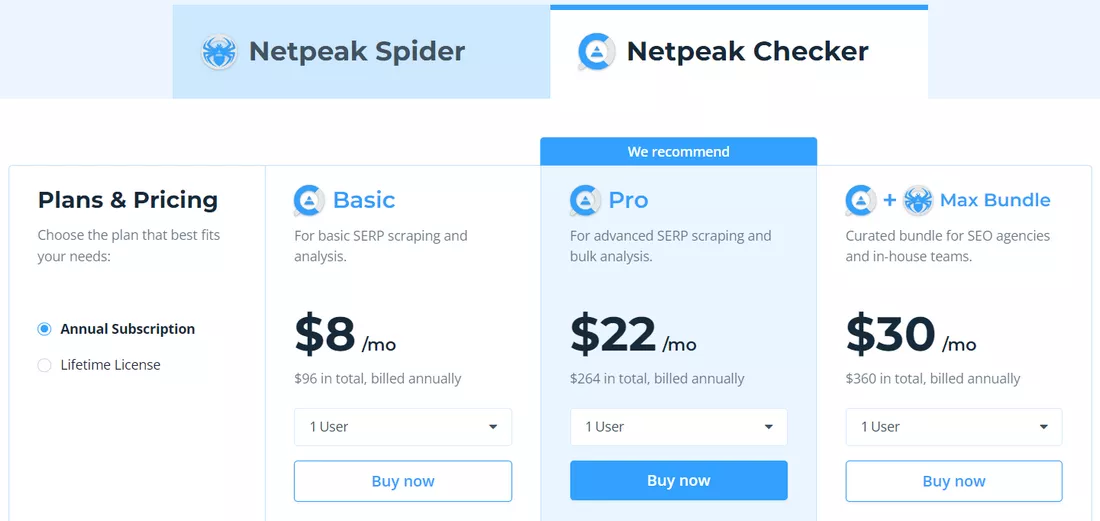

Netpeak Checker is a comprehensive tool for analyzing important website SEO metrics. By providing information about your website and those of your competitors, the program helps you make informed decisions to improve your online presence and attract more customers. Netpeak Checker is a paid tool, but its price is relatively low, especially considering how many features it offers. Several pricing plans are available, and the free trial lets you get familiar with the interface and test the basic functions.

What problems does Netpeak Checker solve?

Netpeak Checker is designed to help users investigate important website metrics and get useful SEO data, including:

- information about the on-page status of the website:
  - server response code and response time;
  - content of the Title, Description, Robots, Canonical, and Hreflang tags;
  - whether website pages are allowed or blocked in robots.txt;
  - number of internal and external links;
  - the presence of redirects, their number, and the final URL of the redirect chain;
  - content of H1–H6 subheadings;
  - size of HTML pages;
  - the number of characters and words on the website pages.

- parsing of contact information:
  - number and value of email addresses;
  - number and value of phone numbers;
  - number of and links to messengers (Telegram, WhatsApp, Viber, WeChat);
  - number of and links to social networks (Facebook, Instagram, LinkedIn, Twitter, YouTube, Pinterest).

- DNS data:
  - IP;
  - country;
  - continent.

- Whois information:
  - date of registration and expiration of the domain;
  - root domain.

- data from popular SEO services:
  - Serpstat;
  - Ahrefs;
  - Majestic;
  - Moz;
  - SimilarWeb;
  - SEMrush.

- data on website traffic:
  - total number of visitors;
  - traffic distribution by channels (Direct, Search, Display, Referral, Social, Mail).

- data from the indexes and results of the Google, Bing, and Yahoo search engines:
  - the total number of pages of the website in the index;
  - presence of individual pages in the index;
  - Title, Description, and URL values from the search snippet.

- information about the structured data on the website:
  - types and number of structured data schemes used on the website;
  - errors and warnings of structured data schemes.

- Core Web Vitals (CWV) values:
  - Score;
  - LCP;
  - FID;
  - CLS.

- evaluation of how convenient the website is to view on mobile devices (Mobile Friendly).
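To make a few of the on-page metrics more concrete, here is a minimal Python sketch that pulls the Title, Description, Canonical, and H1 values out of an HTML string and measures its size. This is only an illustration of what such metrics mean, not how Netpeak Checker works internally; the class name `OnPageParser`, the function `on_page_metrics`, and the sample page are all assumptions made for this example.

```python
from html.parser import HTMLParser


class OnPageParser(HTMLParser):
    """Collects a few on-page values from raw HTML (illustrative only)."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = None
        self.canonical = None
        self.h1 = []
        self._current = None  # tag whose text is currently being captured

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("title", "h1"):
            self._current = tag
            if tag == "h1":
                self.h1.append("")
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content")
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        if self._current == "title":
            self.title += data
        elif self._current == "h1":
            self.h1[-1] += data


def on_page_metrics(html: str) -> dict:
    """Return a small dictionary of on-page values for one HTML document."""
    parser = OnPageParser()
    parser.feed(html)
    return {
        "title": parser.title.strip(),
        "description": parser.description,
        "canonical": parser.canonical,
        "h1": [h.strip() for h in parser.h1],
        "html_size_bytes": len(html.encode("utf-8")),
    }


# Hypothetical sample page used only for this demonstration.
sample = """<html><head><title>Demo page</title>
<meta name="description" content="A short demo.">
<link rel="canonical" href="https://example.com/demo">
</head><body><h1>Hello</h1><p>Some text.</p></body></html>"""

metrics = on_page_metrics(sample)
print(metrics)
```

A dedicated tool collects these values for thousands of URLs at once and adds server-side data such as response codes and redirects, which this sketch does not attempt.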

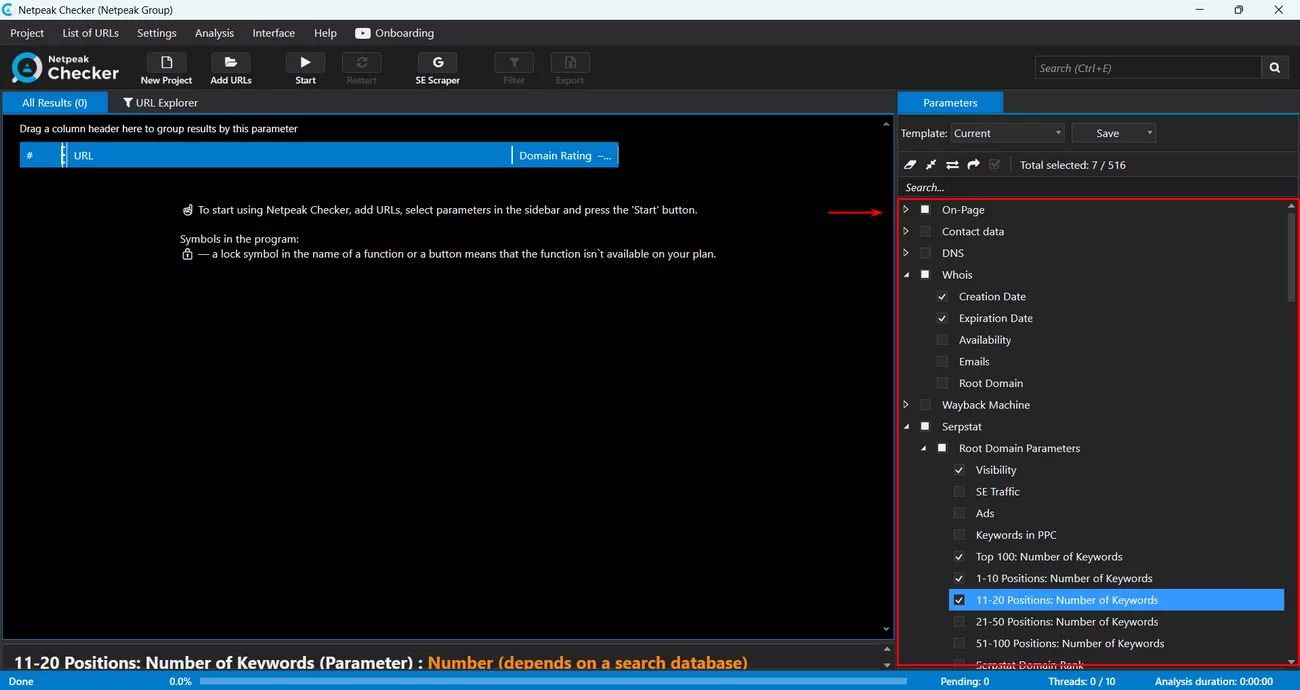

All the metrics described above are conveniently collected in the sidebar on the right side of the program interface. To get the data, just check the corresponding boxes:

Netpeak Checker is especially useful for SEO work at the website development stage, helping ensure that the site is technically optimized and compliant with search engine requirements from the ground up.
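One such technical check at the development stage is whether important pages are blocked in robots.txt. The sketch below shows the idea with Python's standard `urllib.robotparser`; the helper name `allowed_urls`, the rules, and the URLs are assumptions for illustration, and the standard-library parser may interpret some rules (for example, wildcards) differently from search engines or from Netpeak Checker.

```python
from urllib.robotparser import RobotFileParser


def allowed_urls(robots_txt: str, urls, user_agent="*"):
    """Map each URL to True (allowed) or False (blocked) for the given user agent."""
    parser = RobotFileParser()
    # parse() accepts the file content as a list of lines, so no network call is needed.
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(user_agent, url) for url in urls}


# Hypothetical robots.txt content and URLs.
robots_txt = """User-agent: *
Disallow: /admin/
"""

report = allowed_urls(
    robots_txt,
    ["https://example.com/", "https://example.com/admin/login"],
)
print(report)
```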

Features of Netpeak Checker functionality

Next, let's take a closer look at some of the notable features of Netpeak Checker.

User-friendly interface

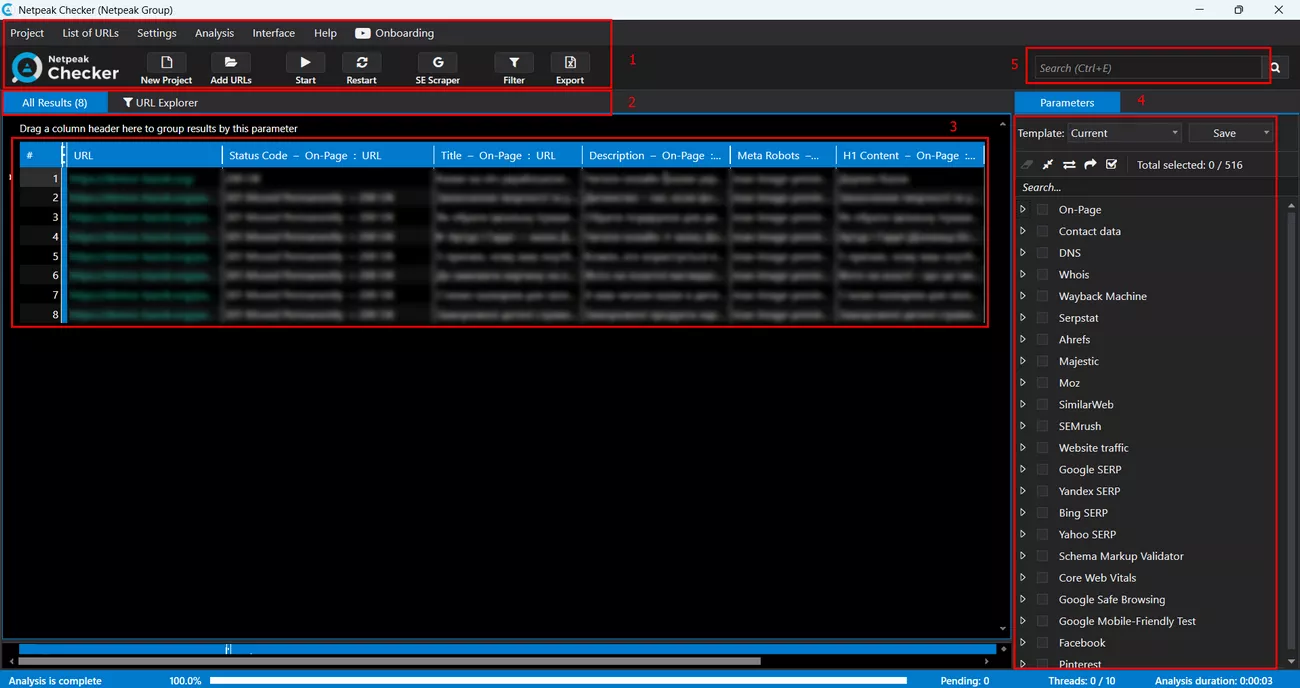

The Netpeak Checker interface is user-friendly and intuitive:

- At the top of the window, there is a command panel with basic operating commands: creating, saving, and loading projects, exporting and pasting website URLs, exporting and importing data, program settings, etc.

- All data is conveniently divided into tabs with the possibility of filtering.

- The results are presented in an easy-to-read tabular form.

- On the right sidebar, a convenient drop-down list shows all the metrics available for analysis.

- Above the sidebar, a search bar helps you find exactly the information you need.

Project-based work format

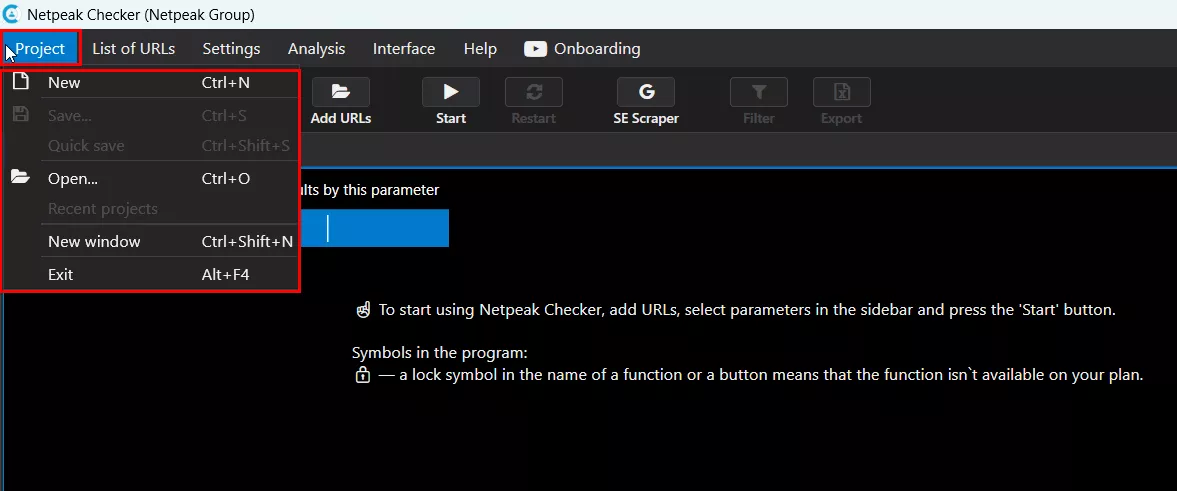

Data is processed on a project basis. For each data set, a separate project is created and can be saved, exported, and imported. Netpeak Checker can also be opened in several windows, which allows you to work with several projects simultaneously. All project operations are available in the "Project" tab of the command panel:

Convenient data filtering

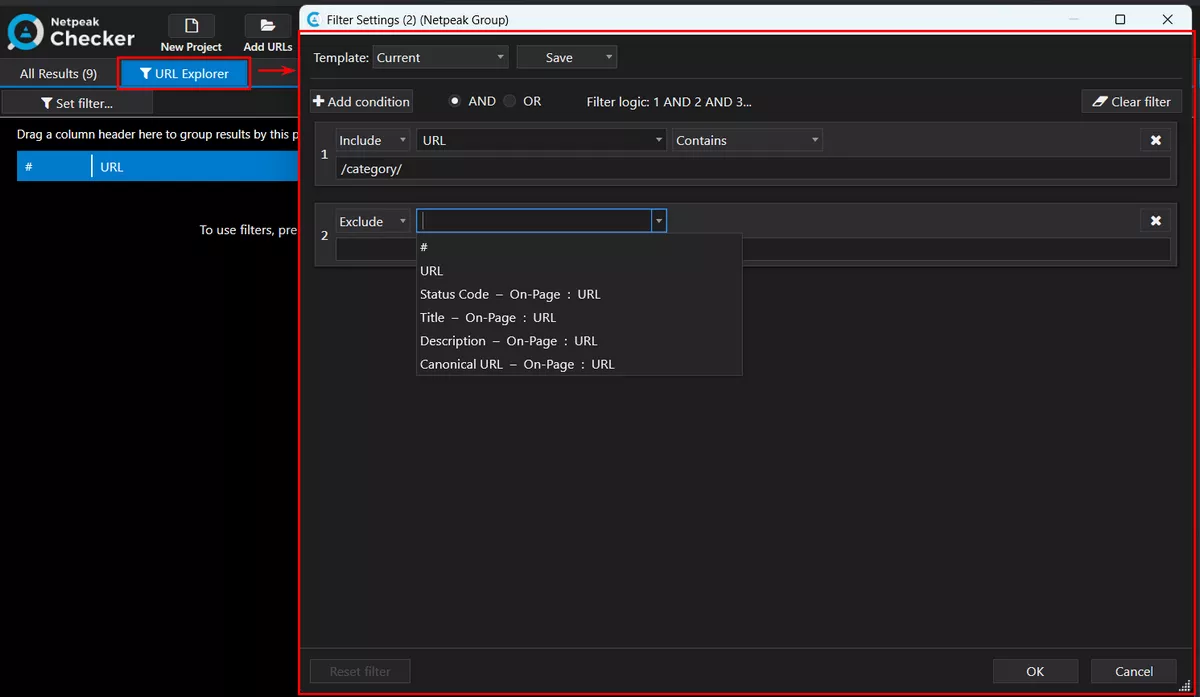

All data can be filtered using the "Include" and "Exclude" operators by selecting the necessary metrics and specifying the filtering conditions:
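The logic behind "Include" and "Exclude" filtering can be sketched in a few lines of Python. The metric names, operators, and rows below are hypothetical and only show the general idea: a row is kept when it matches all conditions ("include") or when it does not ("exclude").

```python
import operator

# Supported comparison operators for this sketch.
OPS = {
    ">": operator.gt,
    "<": operator.lt,
    "==": operator.eq,
    "contains": lambda cell, value: value in cell,
}


def matches(row, conditions):
    """True if the row satisfies every (metric, operator, value) condition."""
    return all(OPS[op](row[metric], value) for metric, op, value in conditions)


def filter_rows(rows, conditions, mode="include"):
    """Keep matching rows in 'include' mode, non-matching rows in 'exclude' mode."""
    if mode not in ("include", "exclude"):
        raise ValueError("mode must be 'include' or 'exclude'")
    keep_matching = mode == "include"
    return [row for row in rows if matches(row, conditions) == keep_matching]


# Hypothetical table rows.
rows = [
    {"url": "https://example.com/", "status_code": 200, "response_time_ms": 120},
    {"url": "https://example.com/old", "status_code": 301, "response_time_ms": 95},
    {"url": "https://example.com/blog", "status_code": 200, "response_time_ms": 840},
]
conditions = [("status_code", "==", 200), ("response_time_ms", "<", 500)]

included = filter_rows(rows, conditions, mode="include")
excluded = filter_rows(rows, conditions, mode="exclude")
print([r["url"] for r in included])
print([r["url"] for r in excluded])
```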

Quick data search

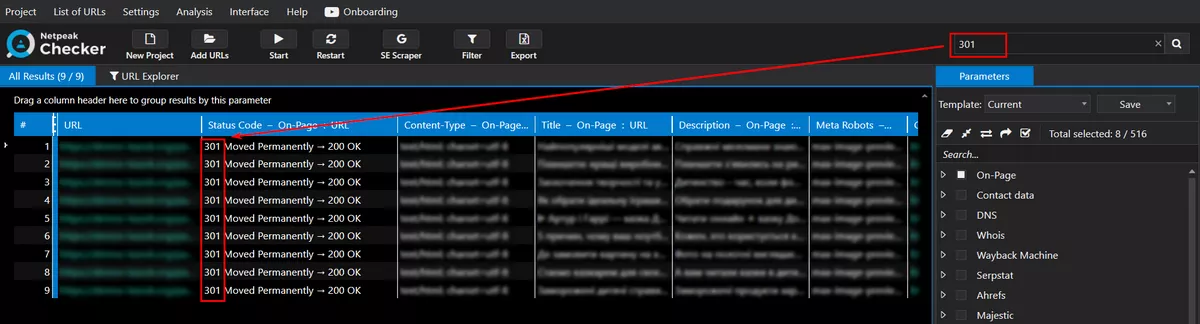

To filter data quickly, use the search bar. Enter a search term, and the data is filtered automatically:

The search is performed across the entire table with metrics.

Integration with Google Drive

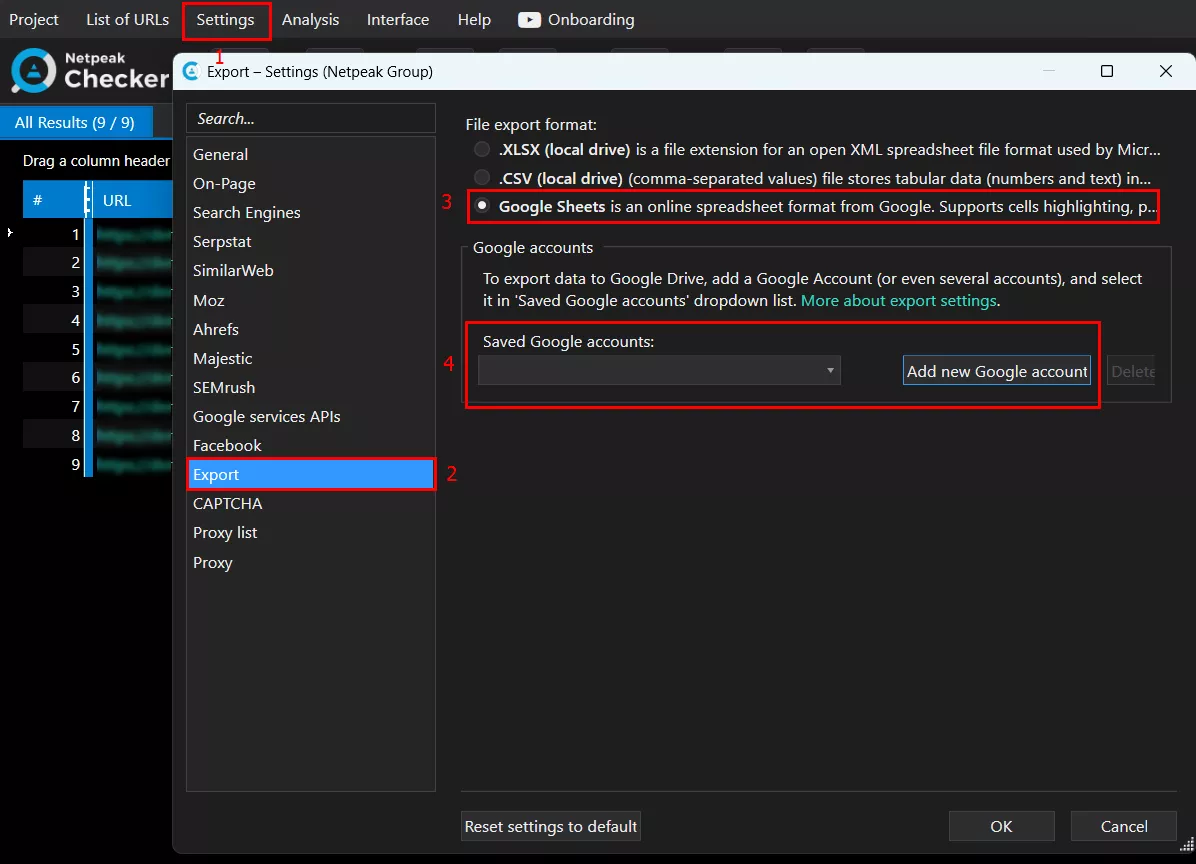

Netpeak Checker can also export data to Google Drive, which lets you save all of the analysis results in Google Sheets format. To do this, log in to your Google account and select the required export method in the program settings:

Parsing of search results

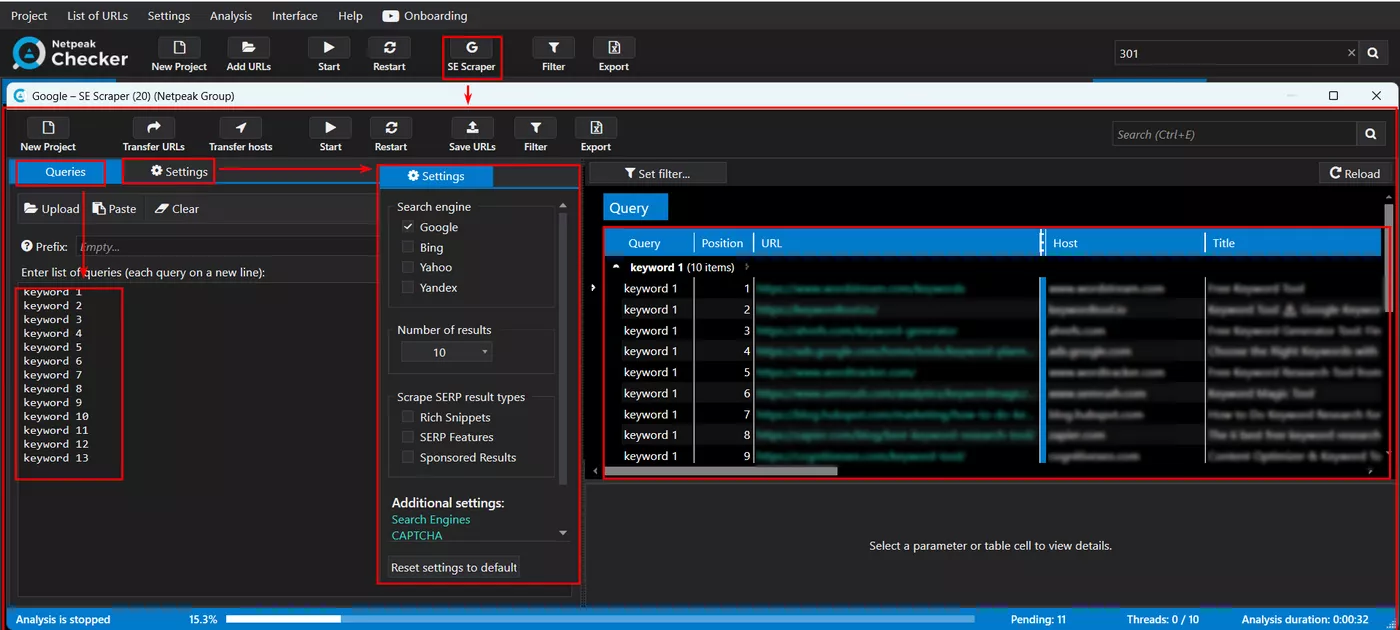

Another important function of the program is parsing search results: for a list of key phrases, it collects the top 1, top 3, top 10, or top 50 results from the Google, Bing, and Yahoo search engines. To do this, insert (or import) the necessary keywords and configure the search engine settings. All search results are displayed in a straightforward tabular format that can be exported for further analysis:

For uninterrupted parsing, I recommend setting up a proxy or anti-captcha, which we'll discuss below.
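Once search results are exported, a typical next step is to cut them down to the top N positions per key phrase. Below is a small Python sketch of that post-processing step; the field names (`keyword`, `position`, `url`) and the rows are hypothetical and do not reflect the actual export columns.

```python
from collections import defaultdict


def top_n(results, n):
    """Return the first n URLs per keyword, ordered by position."""
    by_keyword = defaultdict(list)
    for row in results:
        by_keyword[row["keyword"]].append(row)
    return {
        keyword: [r["url"] for r in sorted(rows, key=lambda r: r["position"])[:n]]
        for keyword, rows in by_keyword.items()
    }


# Hypothetical rows, shaped like an exported results table.
results = [
    {"keyword": "seo audit", "position": 2, "url": "https://b.example/"},
    {"keyword": "seo audit", "position": 1, "url": "https://a.example/"},
    {"keyword": "seo audit", "position": 3, "url": "https://c.example/"},
    {"keyword": "link checker", "position": 1, "url": "https://d.example/"},
]

top_two = top_n(results, 2)
print(top_two)
```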

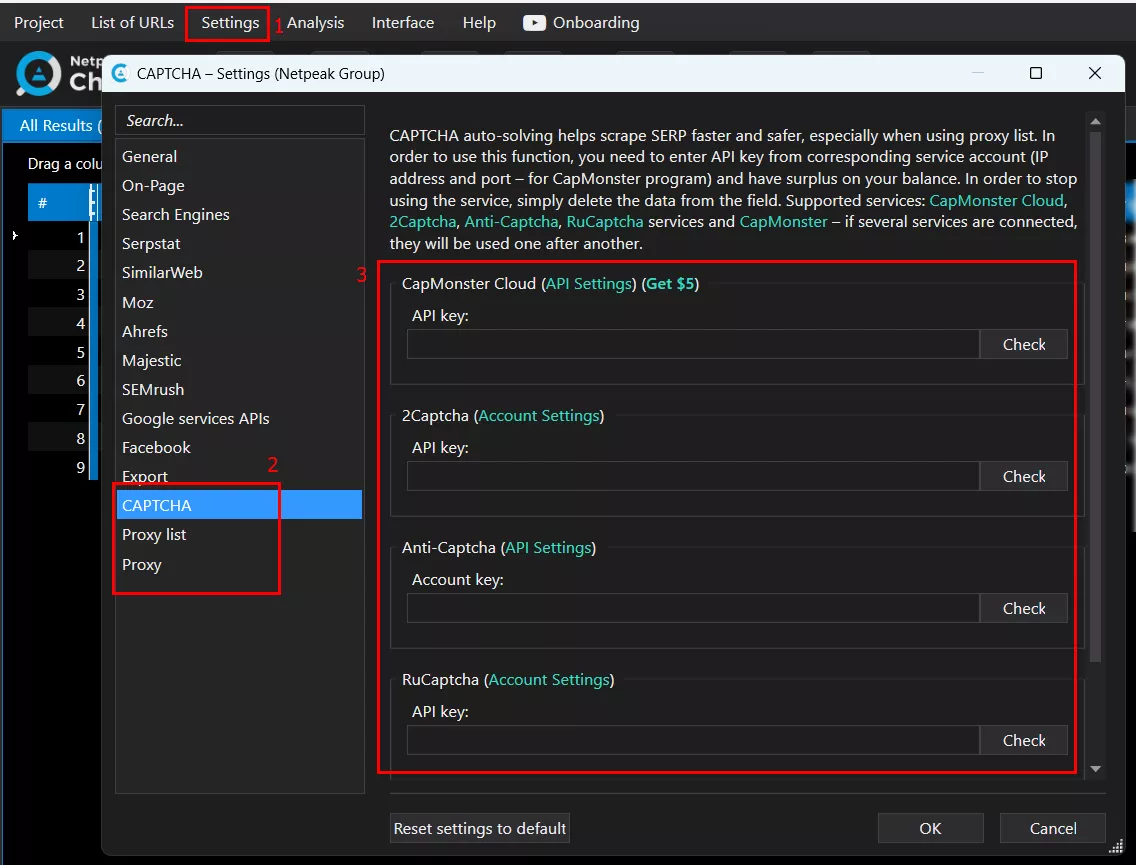

Using proxies and anti-captcha

To ensure uninterrupted parsing of data from websites and search engine results, Netpeak Checker allows you to use proxy servers and the APIs of popular services that solve CAPTCHAs automatically. To do this, just fill in the necessary fields in the program settings:

At the time of writing, Netpeak Checker supports the following anti-captcha services: CapMonster Cloud, 2Captcha, Anti-Captcha, RuCaptcha, and CapMonster. For greater effect, you can use the APIs of several services in parallel.

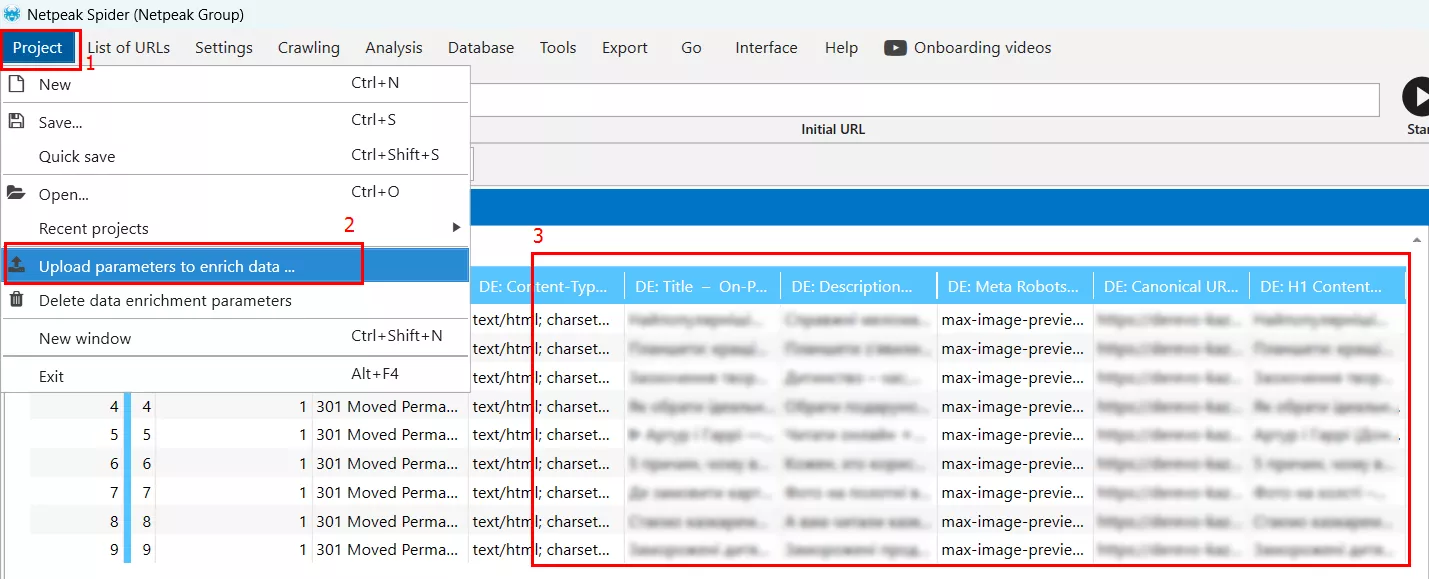

Integration with Netpeak Spider

Netpeak Checker allows you to transfer data to Netpeak Spider, supplementing its reports with new information, which makes website analysis more comprehensive. To do this, export the Netpeak Checker data in CSV format and select the "Upload parameters to enrich data..." option in Netpeak Spider. All the data will be added to the metrics already available in the table.

For a full-fledged audit of your website, I recommend using a combination of both of these tools.
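Conceptually, enriching one report with another is a join of two tables by URL. The Python sketch below illustrates that idea on two small CSV snippets; it is not how Netpeak Spider implements the feature, and the column names (`URL`, `Status Code`, `Domain Expiration`) and the function `enrich` are assumptions made for this example.

```python
import csv
import io


def enrich(base_rows, extra_rows, key="URL"):
    """Add columns from extra_rows to base_rows where the key column matches."""
    extra_by_key = {row[key]: row for row in extra_rows}
    enriched = []
    for row in base_rows:
        merged = dict(row)
        for column, value in extra_by_key.get(row[key], {}).items():
            # Existing columns are kept; only new columns are added.
            merged.setdefault(column, value)
        enriched.append(merged)
    return enriched


# Hypothetical exports from the two tools.
spider_csv = "URL,Status Code\nhttps://example.com/,200\nhttps://example.com/about,301\n"
checker_csv = "URL,Domain Expiration\nhttps://example.com/,2030-01-01\n"

spider_rows = list(csv.DictReader(io.StringIO(spider_csv)))
checker_rows = list(csv.DictReader(io.StringIO(checker_csv)))

enriched = enrich(spider_rows, checker_rows)
for row in enriched:
    print(row)
```

Rows without a match in the second file are left unchanged, so no existing data is lost in the merge.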

Conclusion

Netpeak Checker is a powerful, feature-rich website analysis tool that allows SEO managers, online marketers, and webmasters to analyze large amounts of data that are important for promoting their websites in organic search. Thanks to its intuitive interface, advanced features, and integration with other SEO tools, Netpeak Checker can easily become an indispensable tool for anyone looking to improve their website's performance and stay ahead of the competition.

To complement the diagnostic snapshots from Netpeak Checker and receive a full-fledged, prioritized report on your site’s SEO health, consider our SEO Audit Services. This service dives deeper into technical, structural, and content-related issues to help you move from data to decisive action.
